(This is post 5 of 5 in the Broadening Participation and Broader Impacts Series)

The terms broadening participation and broader impacts have been used extensively in science, technology, engineering, and mathematics (STEM) disciplines, especially within STEM funding solicitations and proposals. Although used interchangeably at times, these two terms have their own unique history and definitions. By understanding these differences and similarities, researchers, educators, and administrators can implement and evaluate them more successfully. In this five-part series we’re calling “Broadening Participation and Broader Impacts” we’ll explore the history of these terms, their implementation, and frameworks to evaluate their success.

Over the course of this blog series, we’ve covered the history of broadening participation (post 1) and broader impacts (post 2), best practices for broadening participation (post 3), and suggestions for how to incorporate broadening participation into broader impacts activities (post 4).

As part of the National Science Foundation’s (NSF) merit review process, all grant proposals are evaluated against two criteria: intellectual merit and broader impacts. As noted in an earlier post, of the five groups of activities NSF has identified to meet the broader impacts criterion, only one, broadening participation, specifically mentions gender, ethnicity, and disability. Several groups have urged NSF to weave the broadening participation issues of diversity, equity, and accessibility into each of these five groups of activities.

We encourage researchers to do this as well, and this post focuses on how to evaluate broadening participation initiatives within broader impacts activities. First, we summarize how NSF’s focused broadening participation programs have been evaluated, which can serve as a framework for smaller-scale efforts.

Evaluating Focused Broadening Participation Programs

As discussed in earlier posts, NSF started creating a portfolio of programs focused on broadening participation as part of its strategic plan to be broadly inclusive. Programs in this portfolio have the explicit goal of broadening participation, and the major part of their budget is dedicated to broadening participation activities. Two major strategies are employed:

- Broadening the access and success of individuals from underrepresented groups at all levels of the pipeline through provision of resources such as scholarships, fellowships, awards and interventions.

- Transforming institutional infrastructure in order to provide learning or work environments that encourage access and success of underrepresented groups in STEM.

Although NSF required grant awardees to report their findings and to conduct a project-level evaluation, by 2008 NSF realized it needed to develop and validate a strategy to better assess the value of its investment in broadening participation. To meet this need, NSF sponsored a workshop in April 2008 that included NSF grantees, professional evaluators, and the policy community. The workshop gave NSF recommendations on what it should require for program monitoring and for program evaluation (see the report, Framework for Evaluating Impacts of Broadening Participation Projects).

The following summary is drawn from the NSF report, which focused on answering two questions: “What metrics should be used for project monitoring?” and “What designs and indicators should be used for program evaluation?”

What metrics should be used for project monitoring?

- Monitoring data collected at short-term intervals (typically one year or less) to determine whether programs are on target in meeting established benchmarks.

- Follow-up data collected on a yearly basis for the period of the intervention or even beyond.

- Augment monitoring data with other data collected specifically for evaluation purposes, for example, a retrospective survey of program participants to collect follow-up data not included in the monitoring data.

What designs and indicators should be used for program evaluation?

Unlike monitoring metrics mentioned above, evaluation normally develops research questions and impact indicators over a longer term and is situated within broader program-level goals. Ideally, the results of these data collection efforts are used by policymakers, funders, individual projects, researchers, and the practitioner community. For an overview of evaluation, you can read the STEM Center’s Intro to Evaluation series, or the NSF’s evaluation handbook (The 2010 User-Friendly Handbook for Project Evaluation).

Different Types of Indicators

- Input indicators – measure resources, both human and financial, devoted to a particular program or intervention, and can include measures of characteristics of target populations.

- Process indicators – measure ways in which program services and goods are provided.

- Output indicators – measure the quantity of goods and services produced and the efficiency of production.

- Outcome indicators – measure the broader results achieved through the provision of goods and services. These indicators can exist at various levels: population, agency, and program.

Indicators at the Individual Level

Valid and reliable individual level data are essential for determining the causal pathways for success of broadening participation initiatives. Therefore, indicators at the individual level generally focus on various participant level data and are identified across four levels:

- Participation – the total share that participants from underrepresented groups have of the total enrollment within a particular STEM field or major.

- Persistence or retention – the number of students from underrepresented groups who both return to school and remain in a STEM field.

- Student experiences – various indicators designed to both document and understand the experiences of students from underrepresented groups which may contribute to their success (or lack thereof).

- Attitudes – a host of indicators focusing on students’ perceptions and attitudes toward STEM fields and toward themselves in relation to STEM interest and confidence.
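As a rough illustration of the first two indicators, the sketch below computes a participation share and a persistence count from a small, made-up roster. The record fields (`underrepresented`, `in_stem_year1`, and so on), the function names, and the two-year window are assumptions made for this example and are not taken from the NSF report; a real project would define its cohort and time frame to match its own goals.

```python
from dataclasses import dataclass


@dataclass
class Student:
    # All field names are hypothetical, chosen only for this illustration.
    student_id: str
    underrepresented: bool  # self-reported membership in an underrepresented group
    in_stem_year1: bool     # enrolled in the STEM field or major in year 1
    enrolled_year2: bool    # returned to school in year 2
    in_stem_year2: bool     # enrolled in a STEM field or major in year 2


def participation_share(students):
    """Share of year-1 STEM enrollment held by students from underrepresented groups."""
    stem = [s for s in students if s.in_stem_year1]
    if not stem:
        return 0.0
    return sum(1 for s in stem if s.underrepresented) / len(stem)


def persistence_count(students):
    """Number of underrepresented year-1 STEM students who returned and stayed in STEM."""
    return sum(
        1
        for s in students
        if s.underrepresented
        and s.in_stem_year1
        and s.enrolled_year2
        and s.in_stem_year2
    )


def persistence_rate(students):
    """Persistence count as a fraction of the underrepresented year-1 STEM cohort."""
    cohort = [s for s in students if s.underrepresented and s.in_stem_year1]
    if not cohort:
        return 0.0
    return persistence_count(students) / len(cohort)


# Made-up roster for demonstration only.
roster = [
    Student("a", True, True, True, True),
    Student("b", True, True, True, False),
    Student("c", False, True, True, True),
    Student("d", False, True, False, False),
    Student("e", True, False, True, False),
]

print(participation_share(roster))  # 0.5
print(persistence_count(roster))    # 1
print(persistence_rate(roster))     # 0.5
```

Reporting the persistence rate alongside the raw count is a design choice for this sketch: the count matches the definition above, while the rate makes cohorts of different sizes easier to compare.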

Evaluating Broadening Participation of Informal STEM Education Projects

The broadening participation programs above focus on the access and success of underrepresented groups in STEM. Oftentimes, a program is considered successful if it increases the recruitment, retention, and persistence of underrepresented participants in a STEM degree program.

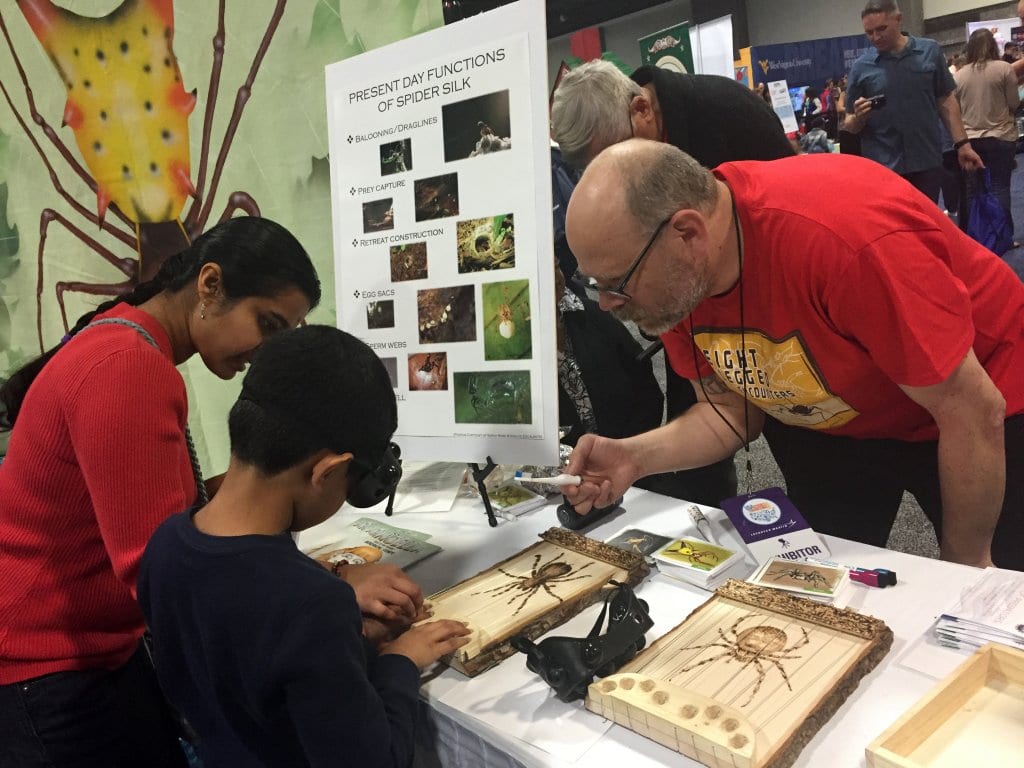

Although this is an important component of broadening participation, increasingly it has been recognized that everyone, regardless of whether they become scientists or engineers, should learn the wonders and possibilities of STEM and maintain that interest and passion throughout their lives. Creating lifelong STEM learners has been a mainstay of those researchers developing and implementing informal STEM outreach and engagement programs. These informal learning environments are also especially effective at engaging non-dominant communities in STEM when these programs are designed to be intellectually and emotionally engaging, culturally responsive, and connected to other learning experiences (NRC 2009, 2015).

We argue that broader impact activities, even though they are smaller in scope and more financially constrained compared to NSF’s focused broadening participation grant programs, are the perfect vehicle to develop and implement informal STEM learning opportunities to diverse audiences.

Developing an evaluation for an informal STEM educational program is very similar to developing one for a larger-scale program with the same goals. For example, individual-level indicators, such as participation, participant experiences, and attitudes, can be collected using a relatively straightforward evaluation plan. NSF has been encouraging PIs to include some kind of formal evaluation in their broader impacts activities, regardless of which broader impacts subcategory they choose to pursue.

However, the very nature of informal STEM education programs can make it difficult to conduct rigorous evaluation. Some of the difficulty lies in having diffuse audiences which are difficult to identify, and multiple external factors that can’t be isolated from the program’s activities for assessment.

To address these challenges, NSF held a workshop in March 2007 to better understand the impacts of its investments in informal science education by developing a framework for their evaluation (Framework for Evaluating Impacts of Informal Science Education Projects). For informal STEM education programs, the evaluation focuses on understanding how participating in or engaging with the program has contributed to fostering, reinforcing, and sustaining STEM interest and understanding. We encourage informal STEM learning practitioners to review the full report.

For informal STEM education programs that also seek to broaden participation, evaluation does not involve a separate set of steps from a regular evaluation approach. Instead, it calls for a holistic framework for thinking about and conducting evaluations in a culturally responsive manner.

Culturally Responsive Evaluation

“Culturally responsive evaluators honor the cultural context in which an evaluation takes place by bringing needed, shared life experiences and understandings to the evaluation tasks at hand and hearing diverse voices and perspectives. The approach requires that evaluators critically examine culturally relevant but often neglected variables in project design and evaluation. In order to accomplish this task, the evaluator must have a keen awareness of the context in which the project is taking place and an understanding of how this context might influence the behavior of individuals in the project.”

The 2010 User-Friendly Handbook for Project Evaluation (p. 75)

The NSF’s 2010 User-Friendly Handbook for Project Evaluation has a chapter dedicated to developing, conducting, and analyzing a culturally responsive evaluation (chapter 7, page 75). Although focused on public health, the Centers for Disease Control and Prevention’s Practical Strategies for Culturally Competent Evaluation also has useful information that can be applied more broadly. We encourage all those involved in evaluations, and particularly those evaluating broadening participation efforts, to read these resources in their entirety. Below we summarize some of the key points.

Engage with stakeholders throughout the process

An evaluation plan needs proper and appropriate evaluation questions. To be culturally responsive, the questions of stakeholders need to be heard and addressed. Stakeholders should include a range of people, including program participants or those affected by the program, and they should be included in all stages of the evaluation process.

People learn different things, not just different amounts

Because learners build on their existing knowledge and experience, the diversity of participant backgrounds means that project deliverables will offer a large range of possible learning outcomes. Assessments are most likely to show learning impacts if they are open-ended enough to capture this range, including unintended impacts.

Use methods that are respectful, appropriate, and comfortable

For example, it may be useful to supplement participant interviews with alternative forms of assessment, such as drawings, taking photographs, sorting tasks, narratives, or think-alouds.

Consider cultural context when analyzing data

Obtaining stakeholder input while analyzing evaluation data is a good strategy in order to capture the nuances in how language is expressed and the meaning it may hold for various cultures.

“Data do not speak for themselves nor are they self-evident; rather, they are given voice by those who interpret them. The voices that are heard are not only those who are participating in the project, but also those of the analysts who are interpreting and presenting the data.”

The 2010 User-Friendly Handbook for Project Evaluation (p. 91)

Examples of Inclusive Data Collection

The Science Museum of Minnesota, in conjunction with Campbell-Kibler Associates, developed a website, Beyond Rigor: Improving Evaluations with Diverse Populations, that provides tips on designing, implementing, and assessing the quality of evaluations of programs and projects that aim to improve quality, quantity, and diversity in STEM. Below are some general tips to make the data collection process more inclusive.

Ask for demographic information at the end

Begin with an interesting question that sets the tone for the measure and makes respondents feel their opinions are important to you. Research has found that asking for demographic information at the beginning of a measure can affect participant responses, particularly those of people of color and white women (Danaher & Crandall, 2008).

Have participants define their own race/ethnicity and disability status

Don’t have the identification done by data collectors or project/program staff. If the funder requires that a standard set of categories for race/ethnicity and/or disability be used, use those categories but also, in an open-ended question, ask participants to indicate their own race/ethnicity and disability status.

Review the physical space

Prior to collecting data from participants, review the physical space to make sure that the décor does not reflect stereotypes and is both comfortable and inviting to the target groups. This includes being accessible to people with disabilities.

Review the oral and written introductions prior to data collection

This will help identify potential triggers of stereotype threat. Don’t mention any gender or race differences that have been found in the tests or surveys being used.

Use accessibility checkers

An accessible measure is one that is available to as many people, with and without disabilities, as possible. If your software supports it, use Microsoft’s Accessibility Checker to check for accessibility. If your measures are web-based, a number of tools to check accessibility can be found at the web site of the World Wide Web Consortium.

More Evaluation Resources & Networks

Since NSF’s 2007 workshop, a large network and community has developed around informal STEM education programs and their evaluation. Because these programs are so varied and evaluations need to be tailored to them, resources exist for the common types of informal STEM education programs. Below are some of these resources and networks.

The Center for Advancement of Informal Science Education (CAISE)

The Center for Advancement of Informal Science Education (CAISE) is a portal to project, research, and evaluation resources designed to support and connect the informal STEM education community in museums, media, public programs and a growing variety of learning environments. CAISE is an NSF-funded resource center for the Advancing Informal STEM Learning (AISL) program.

The American Evaluation Association (AEA)

The American Evaluation Association is an international professional association of evaluators devoted to the application and exploration of program evaluation, personnel evaluation, technology, and many other forms of evaluation. The AEA also hosts the AEA Public Library where members of the community post instruments, presentations, reports, and more. AEA has a number of topical interest groups (TIGs) that may be of particular interest to informal science education evaluators.

Visitor Studies Association (VSA)

The Visitor Studies Association (VSA) is a professional organization focused on all facets of the visitor experience in museums, zoos, nature centers, visitor centers, historic sites, parks and other informal learning settings. The organization is committed to understanding and enhancing visitor experiences in informal learning settings through research, evaluation, and dialogue.

This five-part blog series was developed to provide an overview of broadening participation and broader impacts in the context of NSF’s programs and initiatives. Much of the information included in this series came from the NSF, or NSF-sponsored websites and reports, and is only a representative sample of the information and resources about broadening participation and broader impacts. Links to the resources used were included wherever possible for those seeking more information on a certain topic.

Dr. Cheryl L. Bowker

Associate Director – STEM Center

Cheryl has worked at the STEM Center since 2013 and has evaluated and managed several STEM education projects. Cheryl enjoys impassioned discussions about research, education, and insects. Check out the Staff page for contact details.

Disclaimer: The thoughts, views, and opinions expressed in this post are those of the author and do not necessarily reflect the official policy or position of Colorado State University or the CSU STEM Center. The information contained in this post is provided as a public service with the understanding that Colorado State University makes no warranties, either expressed or implied, concerning the accuracy, completeness, reliability, or suitability of the information. Nor does Colorado State University warrant that the use of this information is free of any claims of copyright infringement. No endorsement of information, products, or resources mentioned in this post is intended, nor is criticism implied of products not mentioned. Outside links are provided for educational purposes, consistent with the CSU STEM Center mission. No warranty is made on the accuracy, objectivity or research base of the information in the links provided.

Cover Image: The underside of artificially selected blue buckeye butterfly (Junonia coenia) wing scales showing their iridescent lamina. Research supported by U.S. National Science Foundation grants DEB 1601815 and DGE 1106400. Credit: Rachel Thayer

