In our first post in the Broadening Participation and Broader Impacts blog series, “A History of Broader Impacts Criterion Within the National Science Foundation”, we detailed the creation of an explicit broader impacts criterion in the merit review process for National Science Foundation (NSF) proposals. In the 2021 Proposal & Award Policies & Procedures Guide (PAPPG), NSF lists three merit review principles to be considered by PIs, NSF reviewers, and NSF program officers, one of which states:

“Meaningful assessment and evaluation of NSF funded projects should be based on appropriate metrics, keeping in mind the likely correlation between the effect of broader impacts and the resources provided to implement projects.”

NSF goes on to state that PIs are expected to be accountable for carrying out the activities described in the Broader Impacts section of their funded project. “Thus, individual projects should include clearly stated goals, specific descriptions of the activities that the PI intends to do, and a plan in place to document the outputs of those activities”.

In summary, NSF proposals need a well-articulated evaluation plan for their Broader Impacts activities. We, at the STEM Center, have spent a decade educating researchers on the importance of evaluations. Our website contains many resources on evaluation, including descriptions, evaluation services, and examples of evaluations. But you don’t have to take our word for it. Advancing Research Impact in Society (ARIS) is an NSF-supported organization that elevates research impacts by providing high-quality resources and professional development opportunities for researchers, community partners, and engagement practitioners. ARIS recently hosted a webinar on Evaluating BI Activities, which reiterated the importance of evaluation in NSF grants. Below is a summary of the key points from this webinar.

What is Evaluation?

Evaluation, in broad terms, is a systematic process that involves collecting data based on predetermined questions or issues. Evaluation is done to enhance knowledge or decision-making about a program, process, product, system, or organization. The definition of evaluation used by ARIS is the systematic investigation of the merit (quality), worth (value), or significance (importance) of a program, as most NSF broader impacts activities focus on the creation and implementation of a program.

Evaluators also frequently use the terms formative and summative to describe the type of evaluation being conducted. The two types have the following characteristics:

Formative:

- Programs are evaluated during development and throughout the implementation

- Provides information on how to revise/improve while in process

- Done for the benefit of the implementer

Summative:

- Programs are evaluated upon conclusion

- Done for the benefit of the funder

The Benefits of Evaluation

There are additional benefits of evaluation beyond checking a box on NSF’s proposal requirements. These include:

- Focusing the project

    - Clarifies what the program is trying to achieve

    - Creates a foundation for strategic planning

- Improving the project design and implementation

    - Identifies and modifies ineffective practices

    - Identifies and leverages program strengths

- Tracking measurable project outcomes

    - Provides evidence of effectiveness

    - Produces credibility and visibility

- Substantiating requests for increased funding

    - Provides documentation for performance and annual reports

    - Demonstrates program impact to grant providers and other constituents

Designing A Broader Impacts Evaluation

Even if researchers partner with an evaluator on their grant proposal, they should be able to answer these questions about their program.

- Why am I conducting this evaluation?

- What am I measuring?

- Who is involved?

- What is my timeline?

- What kinds of data will I collect? Examples include simple quantitative measures, pre- and post-testing to measure gains or changes in attitude, and surveys, interviews, and/or focus groups to examine changes in behavior and perception.
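To make the pre- and post-testing idea concrete, here is a minimal sketch of how paired scores might be summarized. The function name `summarize_gains` and the ratings are hypothetical and made up for illustration; a real evaluation would choose its instrument and analysis with an evaluator.

```python
from statistics import mean


def summarize_gains(pre_scores, post_scores):
    """Summarize paired pre/post scores for the same participants."""
    if len(pre_scores) != len(post_scores):
        raise ValueError("Each participant needs both a pre and a post score.")
    # Gain for each participant: post score minus pre score.
    gains = [post - pre for pre, post in zip(pre_scores, post_scores)]
    return {
        "participants": len(gains),
        "mean_gain": mean(gains),
        "improved": sum(1 for g in gains if g > 0),
    }


# Hypothetical 1-5 attitude ratings, invented for illustration only.
pre = [3, 2, 4, 3]
post = [4, 4, 4, 5]
summary = summarize_gains(pre, post)
print(summary)
```

A summary like this covers only the simple quantitative side; surveys, interviews, and focus groups would still be needed to examine changes in behavior and perception.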

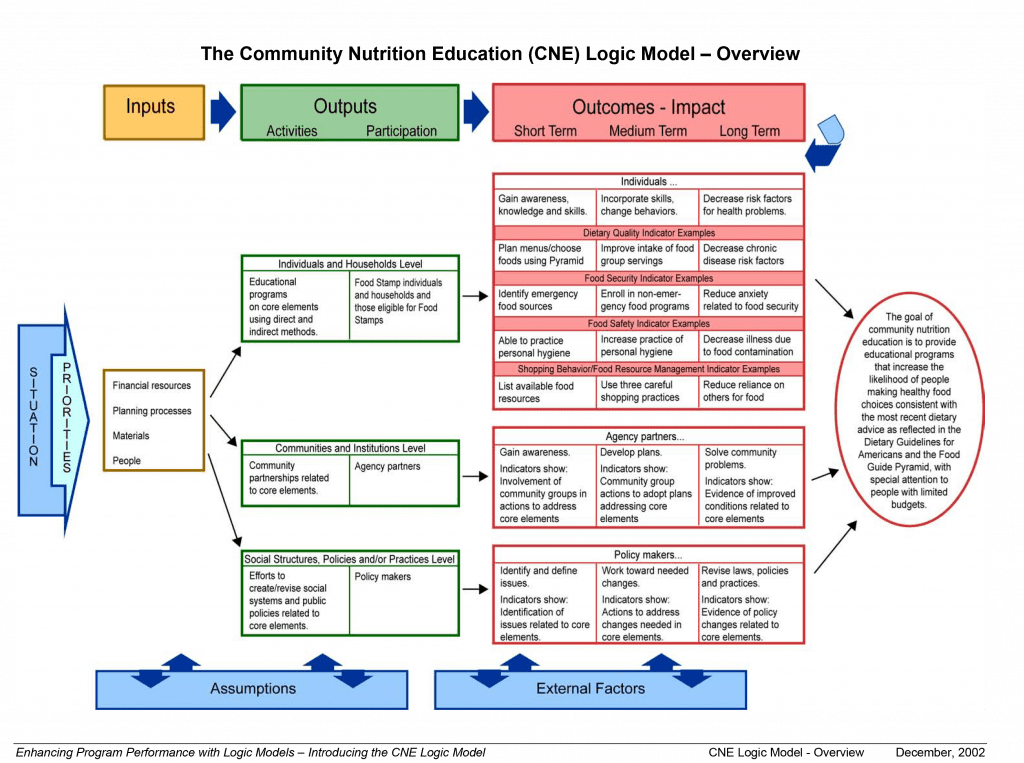

Use A Logic Model

A logic model is a visual representation of your project and is a great way to set clear outcomes for yourself, the evaluator, and the rest of the project team. The University of Wisconsin’s Program Development and Evaluation Center has templates and examples of logic models they developed for their Extension programs.

Overall, the benefits of a logic model include:

- Provides a common language

- Helps differentiate between what we do and results (outcomes)

- Increases understanding about the program

- Guides and helps focus work, which leads to improved planning and management

- Increases intentionality and purpose

- Provides coherence across complex tasks, diverse environments

Keep in mind that, for all of its benefits, a logic model is not an evaluation plan; it is an organizational tool.

Frequently Asked Questions About Evaluation

How much evaluation is enough?

Like most things, it depends. Most evaluations cost 5-10% of the total proposal budget, but this depends on the type of proposal and the scope of the Broader Impact activities. If the Broader Impact activities include the research component of the proposal, a more extensive evaluation plan is probably needed (e.g., NSF IUSE). If the Broader Impact activities are separate from the research and smaller in scope, then a less complex evaluation plan is needed (e.g., NSF CAREER). The best way for researchers to learn what kind of evaluation is needed, and how much it might cost, is to work with an evaluator during the proposal phase. Ultimately, enough data needs to be collected to know if your program is successful.
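As a rough illustration of the 5-10% guideline, the arithmetic might look like the sketch below. The function name `evaluation_budget_range` and the $500,000 total are hypothetical, chosen only to show the calculation; the right figure for a given proposal should come from a conversation with an evaluator.

```python
def evaluation_budget_range(total_budget, low_pct=5, high_pct=10):
    """Return the (low, high) dollar range for evaluation costs.

    The default percentages reflect the 5-10% rule of thumb; adjust
    them for the type of proposal and the scope of the activities.
    """
    low = total_budget * low_pct / 100
    high = total_budget * high_pct / 100
    return low, high


# Hypothetical total proposal budget, for illustration only.
low, high = evaluation_budget_range(500_000)
print(f"Plan for roughly ${low:,.0f} to ${high:,.0f} for evaluation.")
```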

Can you evaluate your own Broader Impact activities?

Yes, a PI can evaluate their own Broader Impact activities, but you might want to consider outsourcing the evaluation depending on the scope of the project. There is no rule at NSF against evaluating your own activities, but you should approach the evaluation with the same ethical standards as any research. The biggest concern is that the evaluation will consume too much of your time or energy.

Where do you go to find evaluation help?

Your own university or college might have a center on campus that helps with or conducts evaluations. These centers might be within a School of Education, Social Work, Psychology, or other social sciences department. If your own organization lacks evaluation services, or the program guidelines require an external evaluator on your project, you can turn to centers at other universities or use the Find an Evaluator feature on the website of the American Evaluation Association. The STEM Center at Colorado State University is also available, and you can Schedule a Consultation with us to discuss your project.

Dr. Cheryl L. Bowker

Associate Director – STEM Center

Cheryl has worked at the STEM Center since 2013 and has evaluated and managed several STEM education projects. Cheryl enjoys impassioned discussions about research, education, and insects. Check out the Staff page for contact details.

Disclaimer: The thoughts, views, and opinions expressed in this post are those of the author and do not necessarily reflect the official policy or position of Colorado State University or the CSU STEM Center. The information contained in this post is provided as a public service with the understanding that Colorado State University makes no warranties, either expressed or implied, concerning the accuracy, completeness, reliability, or suitability of the information. Nor does Colorado State University warrant that the use of this information is free of any claims of copyright infringement. No endorsement of information, products, or resources mentioned in this post is intended, nor is criticism implied of products not mentioned. Outside links are provided for educational purposes, consistent with the CSU STEM Center mission. No warranty is made on the accuracy, objectivity or research base of the information in the links provided.

